Handling a deluge of big data

Due to the sheer volume of data it will have to transport, process, store and distribute to its end users around the globe, the SKA project is considered by many the ultimate Big Data challenge. Learn below about the different parts of the data pipeline.

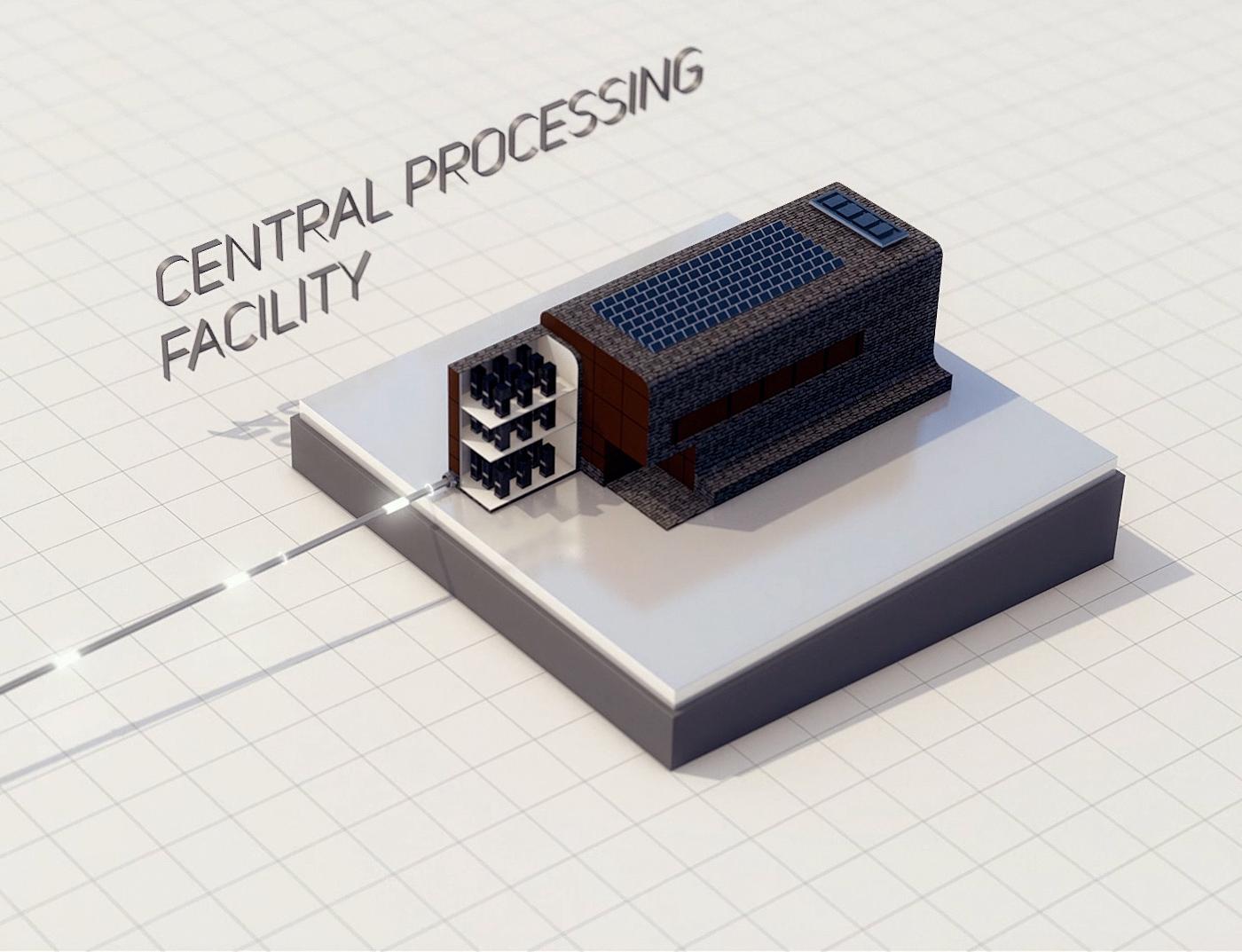

An average of 8 terabits per second of data will be transferred from the SKA telescopes in both countries to central signal processors. This is approximately 1,000 times the equivalent data rate generated by the Atacama Large Millimeter/submillimeter Array (ALMA), a joint European/USA/ East Asia facility in the Chilean Andes, and 77,000 times faster than the global average home broadband speed for 2025 (Source: Ookla). The data will travel along hundreds of kilometres of fibre-optic cables to high-performance supercomputers called science data processors, to be located in Perth and Cape Town.

Because signals from space reach each antenna at a slightly different time, the signals must first be aligned. This is done thanks to highly precise atomic clocks that timestamp the time each signal arrived. Signals are then processed in one of two ways:

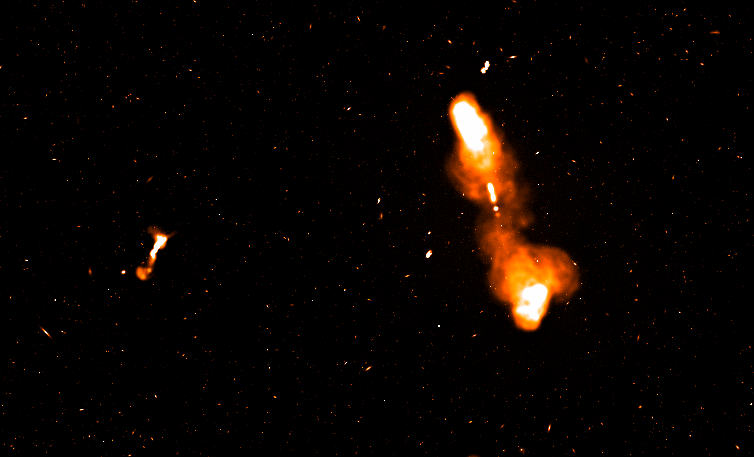

- They can be stacked. This allows the detection of transient objects like pulsars, gamma-ray bursts and fast radio bursts, but also to measure the arrival time of known signals very precisely and if a small time delay is detected, infer that a gravitational wave passed in the space between us and the object a. This is called time-domain astronomy.

- They can be multiplied. This allows the creation of images and is called image-domain astronomy.

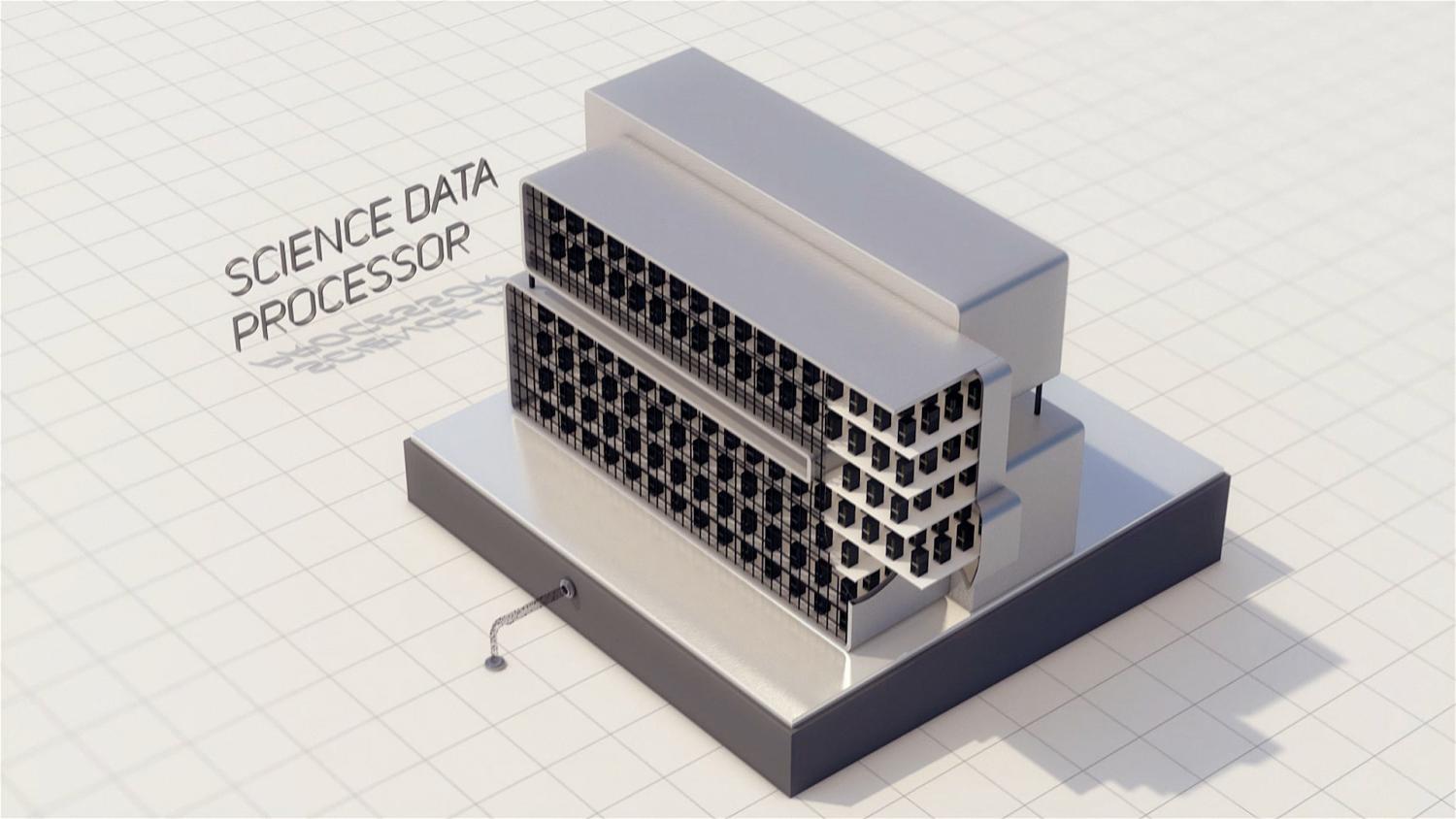

Data is then transferred to two high-performance supercomputers called Science Data Processors (SDPs). To process this enormous volume of data, the two supercomputers will each have a processing speed of 135 PFlops, which would place them among the fastest supercomputers on Earth at the present time.

In total, the SKAO will archive over 700 petabytes of data per year. This would fill the data storage capacity of about 1.5 million typical laptops every year by today’s standard.

From these supercomputers, data will be distributed via intercontinental telecommunications networks to SKA Regional Centres in the SKAO member states where science products will be stored for access by the end users, the astronomers, to conduct their science and improve our knowledge of the Universe.